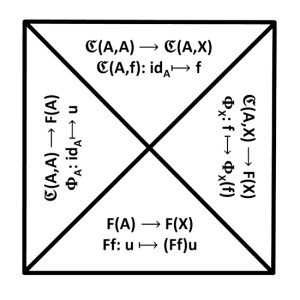

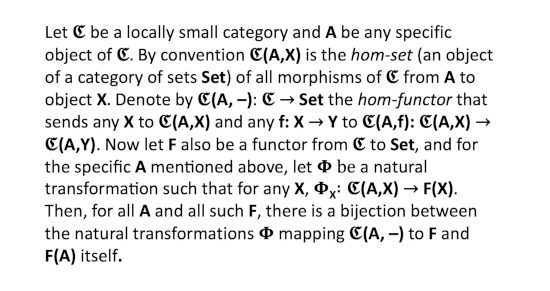

I’ve been wanting to post about the Yoneda Lemma of Category Theory for a while, and I finally decided how to draw my little diagram. Next, I will sketch out the fundamentals of the lemma for you (though not the proof), even though there are many better presentations out there.

Then, oddly enough, I wondered what, if anything, the lemma could tell us about metaphysics. I know this is like using Gödel’s Theorem to say things about epistemology (and so a category mistake, ha ha), but I still think it’s worth a thought or two. Apparently others have explored the same idea.

First, one would have to assume that reality can be modeled by some category, so that the Yoneda Lemma could apply to it. This may in fact be wrong, but apparently Category Theory has been used to model various physical subsystems. If we just go ahead and assume it can, what could the Yoneda Lemma tell us?

- The Yoneda Lemma suggests an object’s essence (nature, being, identity) is entirely defined by its relationships (called morphisms or arrows in Category Theory) with all other objects in its system (i.e., its category), effectively equating an object with its web of connections. Metaphysically, this supports a structuralist or relational view where an entity has no intrinsic nature independent of its context.

- The lemma shows that knowing how an object relates to all other objects is equivalent to knowing the object itself, up to isomorphism. So if two objects have identical relationships with all other objects (more precisely, naturally isomorphic hom-functors), then they are isomorphic and so functionally interchangeable. This supports the idea that the existence of a thing is defined by structural behavior rather than intrinsic substance.

- The lemma works by embedding each object into a category of functors (presheaves) via its “view from elsewhere”: how it appears from the perspective of every other object. This could support a metaphysics where reality is fundamentally perspectival: objects are constituted by the totality of possible perspectives on them. There’s no “view from nowhere” that captures what something is independent of its potential relations.
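To make the last two points a little more concrete, here is a toy sketch in Python. It is only an illustration under a strong simplifying assumption: the category is a made-up preorder (at most one arrow between any two objects), so an object's “view from elsewhere” reduces to the set of objects that have an arrow into it. The object names, the `generators` set, and the helper functions are all hypothetical. In this thin setting, two objects have the same view exactly when they are isomorphic, which is the corollary of the lemma described above.

```python
from itertools import product

# Hypothetical toy category: a preorder on four objects.
# An arrow x -> y exists exactly when (x, y) is in `arrows`.
objects = ["a", "b", "c", "d"]
generators = {("a", "b"), ("b", "a"), ("a", "c"), ("c", "d")}


def closure(objs, gens):
    """Add identity arrows and all composites (reflexive-transitive closure)."""
    arrows = set(gens) | {(x, x) for x in objs}
    changed = True
    while changed:
        changed = False
        # Iterate over a snapshot so we can add composites as we go.
        for (x, y), (y2, z) in product(list(arrows), repeat=2):
            if y == y2 and (x, z) not in arrows:
                arrows.add((x, z))
                changed = True
    return arrows


arrows = closure(objects, generators)


def view_from_elsewhere(target):
    """Objects with an arrow into `target`: its representable presheaf,
    which in a preorder is just a set of objects."""
    return frozenset(x for x in objects if (x, target) in arrows)


def isomorphic(x, y):
    """In a preorder, x and y are isomorphic when arrows run both ways."""
    return (x, y) in arrows and (y, x) in arrows


# Pairs of objects that look the same from every other object.
same_view = {
    (x, y)
    for x, y in product(objects, repeat=2)
    if view_from_elsewhere(x) == view_from_elsewhere(y)
}

# Pairs of objects that are isomorphic in the category.
iso_pairs = {(x, y) for x, y in product(objects, repeat=2) if isomorphic(x, y)}

# The two relations coincide: same relationships, same object (up to iso).
print(same_view == iso_pairs)
```

Here `a` and `b` have arrows in both directions, so they share the same view and count as the same object up to isomorphism, while `c` and `d` are each seen differently and stay distinct. A general category has many arrows between objects, so the full lemma compares whole hom-sets rather than just checking whether an arrow exists.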

The lemma was named after Nobuo Yoneda (1930-1996), a Japanese mathematician and computer scientist.

Further Reading:

https://en.wikipedia.org/wiki/Yoneda_lemma

https://ncatlab.org/nlab/show/Yoneda+lemma

https://blog.juliosong.com/linguistics/mathematics/category-theory-notes-14/

https://www.math3ma.com/blog/the-yoneda-lemma

https://curtjaimungal.substack.com/p/you-are-dual-to-everything-the-yoneda

https://temnoon.com/the-essence-of-relationality-yoneda-lemma-and-the-jeweled-net-of-indra/

https://en.wikipedia.org/wiki/Indra%27s_net

https://equivalentexchange.blog/2014/02/01/relations-all-the-way-down/

[*12.58, *14.160]

<>